Donghwan Rho

donghwan_rho[at]snu.ac.kr

Hello. I am currently a Staff Engineer at Samsung Electronics. I received my Ph.D. from the Department of Mathematical Sciences at Seoul National University, under the supervision of Ernest K. Ryu.

I am interested in:

- Efficient implementations of language models under Homomorphic Encryption (especially CKKS)

- Mathematical reasoning of LLMs

- Interpretability of language models

GitHub / Google Scholar / LinkedIn

Feel free to contact me anytime to discuss research!

News

| Feb 27, 2026 | I have completed my internship at CryptoLab and joined Samsung Electronics as a Staff Engineer. |

|---|---|

| Dec 08, 2025 | I have started a research internship at CryptoLab. |

| Nov 11, 2025 | I gave a talk at the Seoul National University Research Institute of Mathematics Symposium. |

| Oct 23, 2025 | I gave a talk at the Korean Mathematical Society Annual Meeting. |

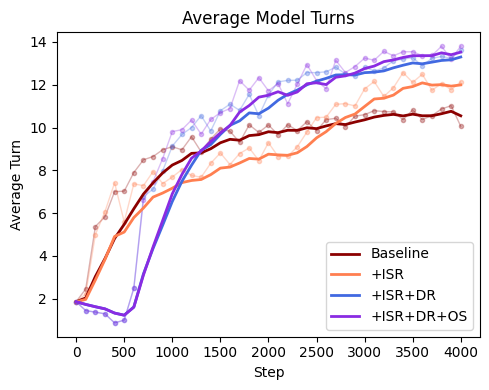

| Oct 15, 2025 | Our paper Studying the Korean Word-Chain Game with RLVR: Mitigating Reward Conflicts via Curriculum Learning is now available on arXiv. This is a work in progress. |

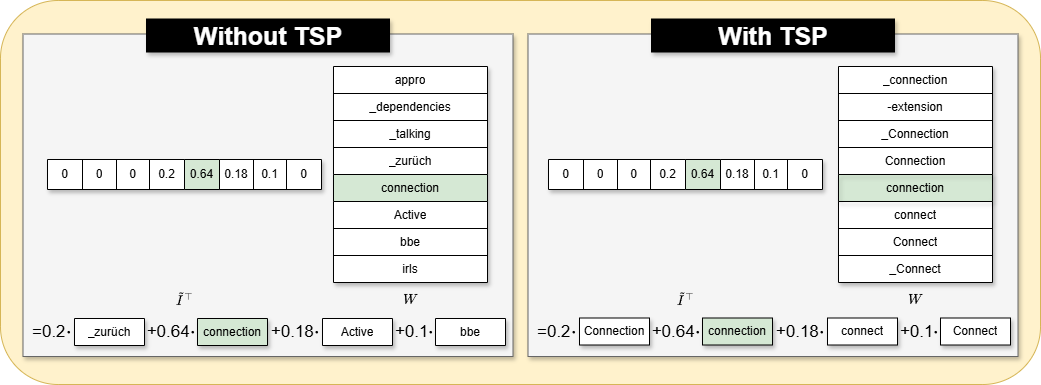

| Oct 15, 2025 | Our paper Traveling Salesman-Based Token Ordering Improves Stability in Homomorphically Encrypted Language Models is now available on arXiv. |

| Jul 10, 2025 | I have been selected as an outstanding TA for the Introduction to Computer Programming and Artificial Intelligence for Scientists course this semester. |

| Jun 19, 2025 | I passed the Ph.D. defense. |

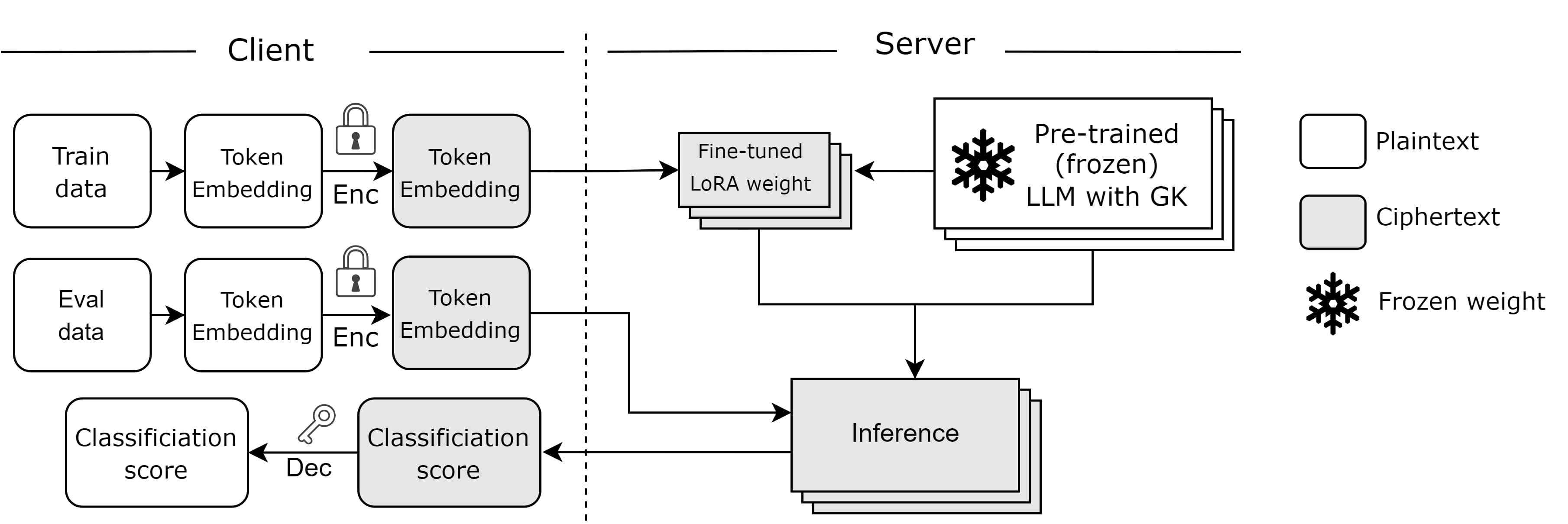

| Jan 23, 2025 | Our first paper Encryption-Friendly LLM Architecture is accepted at ICLR’25. |